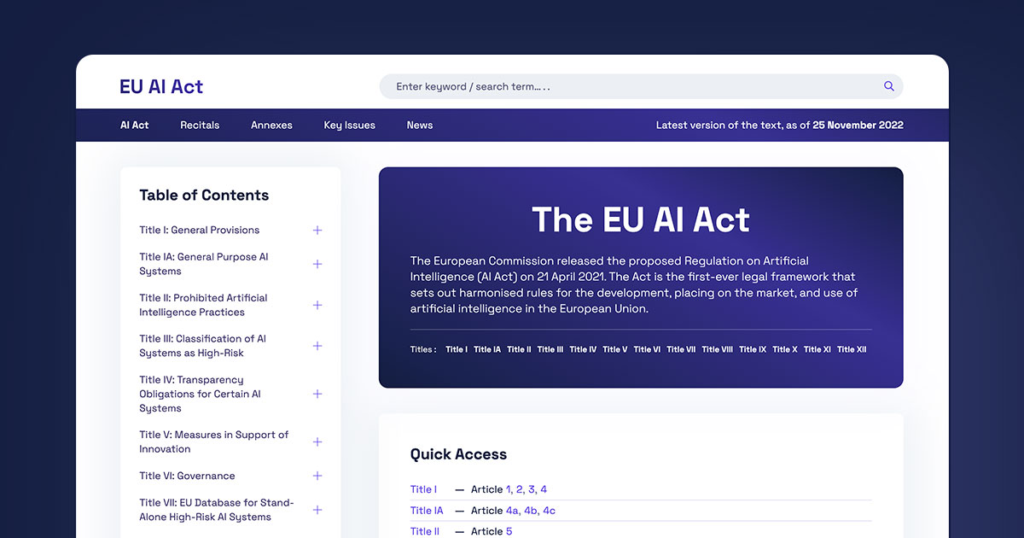

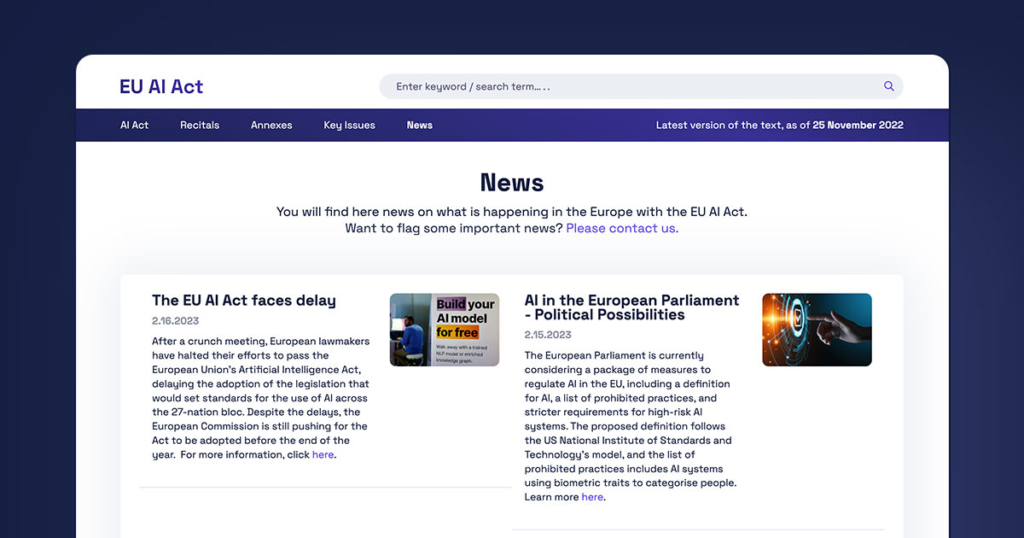

Latest EU AI Act News (Updated 2026)

The European Commission has moved from drafting to execution. The EU AI Act entered into force on 1 August 2024, but news in 2026 focuses on practical enforcement, guidance, and deadlines.

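Recent updates confirm three things. First, the bans on prohibited AI practices have applied since 2 February 2025. Second, obligations for general-purpose AI (GPAI) models have applied since 2 August 2025, with Commission guidelines and a voluntary Code of Practice clarifying thresholds and expectations. Third, national regulators are preparing audit and penalty frameworks ahead of the main 2 August 2026 application date.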

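At the same time, the European Parliament is pushing for tighter coordination between member states. This matters because enforcement for AI systems happens mainly at the country level, through national authorities. Only general-purpose AI models are supervised centrally, by the Commission's AI Office.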

Now the key question follows: What exactly applies today, and what is coming next?

EU AI Act Timeline — What’s Already in Force vs What’s Next

The timeline is no longer theoretical. It is operational.

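- 1 August 2024: Law entered into force

- 2 February 2025: Bans on prohibited AI practices and AI literacy duties apply

- 2 August 2025: GPAI model obligations, governance rules, and penalty provisions apply

- 2 August 2026: Most remaining rules apply, including transparency obligations and high-risk requirements for listed uses such as hiring and credit scoring

- 2 August 2027: High-risk rules extend to AI in products covered by EU product-safety legislation, and GPAI models placed on the market before August 2025 must comply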
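To check which phases already apply on a given date, the milestones can be encoded as a simple lookup table. This is a minimal Python sketch, assuming the application dates set out in Article 113 of the Act; the phase labels are simplified summaries for illustration, not legal text.

```python
from datetime import date
from typing import Optional

# Application dates as read from Article 113; labels are simplified summaries.
MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy"),
    (date(2025, 8, 2), "GPAI model obligations, governance, penalties"),
    (date(2026, 8, 2), "Most remaining provisions, incl. listed high-risk uses and transparency"),
    (date(2027, 8, 2), "High-risk AI in regulated products; legacy GPAI models"),
]


def phases_in_effect(on: date) -> list:
    """Return the label of every milestone dated on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[tuple]:
    """Return the first (date, label) strictly after `on`, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return upcoming[0] if upcoming else None


if __name__ == "__main__":
    today = date(2026, 3, 1)
    for label in phases_in_effect(today):
        print("in effect:", label)
    print("next:", next_milestone(today))
```

Keeping the dates in one list means a later amendment to the schedule only requires editing a single table.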

The biggest shift in recent news is clarity on phased enforcement. Companies now have defined windows, not vague expectations.

This leads directly to the next issue: What actually changed in the latest updates?

Breaking Changes in Recent EU AI Act News

Recent developments focus heavily on generative AI and foundation models.

- Providers must publish training data summaries

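- AI-generated content must be marked as such (these transparency duties apply from 2 August 2026)

- Training compute above 10^25 floating-point operations (FLOPs) triggers a presumption that a model poses “systemic risk”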
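The systemic-risk presumption reduces to a comparison against a fixed compute figure. This is a minimal Python sketch, assuming the Article 51(2) threshold of 10^25 FLOPs and the common 6 × parameters × tokens approximation for dense transformer training compute. That approximation is a rule of thumb from scaling-law research, not a method prescribed by the Act, and the Commission can also designate models as systemic-risk on other criteria, which this sketch ignores.

```python
# Presumption threshold for systemic-risk GPAI models (Article 51(2)):
# cumulative training compute greater than 10^25 floating-point operations.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough dense-transformer estimate C = 6 * N * D (an assumed heuristic)."""
    return 6.0 * parameters * training_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True if compute alone triggers the presumption (strictly greater than)."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD


if __name__ == "__main__":
    # Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
    flops = estimate_training_flops(70e9, 15e12)
    print(f"{flops:.2e} FLOPs, presumed systemic risk: {presumed_systemic_risk(flops)}")
```

Under these assumptions, the hypothetical 70-billion-parameter model lands at about 6.3 × 10^24 FLOPs, below the presumption threshold.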

Companies like OpenAI and Google fall under these rules when operating in the EU market.

Another update is enforcement clarity. The Act uses GDPR-style fines based on turnover, but with higher ceilings: up to €35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited practices (GDPR tops out at 4%).

So the regulation is not just broad. It is financially enforceable.

Next, the focus shifts from law to impact.

Who Is Most Affected Right Now

Not every company faces the same pressure.

- AI startups: Must document models from day one

- SaaS platforms: Need to audit embedded AI features

- Enterprises: Must review internal AI use (HR, finance, risk scoring)

- Non-EU companies: Covered if they place AI systems on the EU market or their AI output is used in the EU

The extraterritorial scope is critical. It mirrors how GDPR expanded globally.
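The territorial triggers can be expressed as a short decision rule. This is a rough Python sketch, assuming a simplified reading of Article 2(1): providers placing systems on the EU market, deployers established in the EU, and non-EU providers or deployers whose output is used in the EU. The `Deployment` fields are hypothetical, and exclusions such as military or purely personal use are ignored.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical description of one AI system from a company's point of view."""
    role: str                   # "provider" or "deployer" (simplified)
    established_in_eu: bool     # organization is established or located in the EU
    placed_on_eu_market: bool   # system offered or put into service in the EU
    output_used_in_eu: bool     # the system's output is used in the EU


def likely_in_scope(d: Deployment) -> bool:
    """First-pass check of the territorial triggers; not legal advice."""
    if d.role == "provider" and d.placed_on_eu_market:
        return True  # applies irrespective of where the provider is based
    if d.role == "deployer" and d.established_in_eu:
        return True
    return d.output_used_in_eu  # catches non-EU actors whose output reaches the EU
```

A US-based provider selling into the EU, for example, is caught by the first rule even though it has no EU establishment.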

This naturally leads to the most practical question: What should companies do immediately?

Immediate Compliance Actions (Based on Latest News)

Start with classification. Without it, nothing else works.

- Map all AI systems in use

- Classify risk level (prohibited, high-risk, limited/transparency, minimal)

- Document datasets, training methods, and outputs

- Prepare audit trails and logging systems

- Assign internal AI accountability roles

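Estimates of the high-risk share vary. The Commission's 2021 impact assessment expected roughly 5–15% of AI applications to be high-risk, but the share inside a given enterprise depends on how many of its systems touch listed areas such as hiring or credit scoring.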
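Mapping and classifying systems is essentially an inventory exercise. This is a minimal Python sketch, assuming a hypothetical in-house inventory with one record per system, an accountable owner, and a first-pass triage into the four risk tiers (prohibited, high-risk, limited, minimal). The lookup sets are illustrative placeholders; a real classification needs a legal reading of Article 5, Article 6, and Annexes I and III.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited (transparency)"
    MINIMAL = "minimal"


# Hypothetical, heavily simplified triage tables keyed by declared use case.
PROHIBITED_USES = {"social scoring", "workplace emotion recognition"}
HIGH_RISK_USES = {"recruitment", "credit scoring", "medical device"}
TRANSPARENCY_USES = {"chatbot", "synthetic media"}


@dataclass
class AISystem:
    name: str
    use_case: str
    owner: str                                          # internal accountability role
    datasets: list = field(default_factory=list)        # documented training/input data


def triage(system: AISystem) -> RiskTier:
    """First-pass risk tier based only on the declared use case."""
    if system.use_case in PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if system.use_case in HIGH_RISK_USES:
        return RiskTier.HIGH
    if system.use_case in TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


inventory = [
    AISystem("cv-screener", "recruitment", owner="HR lead"),
    AISystem("support-bot", "chatbot", owner="CX lead"),
    AISystem("spam-filter", "email filtering", owner="IT lead"),
]

for s in inventory:
    print(f"{s.name}: {triage(s).value} (owner: {s.owner})")
```

The triage result is a starting point for review, not a conclusion; anything flagged high-risk or prohibited should go to legal assessment.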

Once classification is done, the next layer becomes clearer: high-risk obligations.

High-Risk AI Systems — Updated Requirements

High-risk systems are the enforcement priority.

Examples include:

- Hiring and recruitment tools

- Credit scoring systems

- Medical AI applications

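Requirements include:

- Risk management systems

- Data governance and technical documentation

- Automatic logging of events (record-keeping)

- Human oversight mechanisms

- Accuracy, robustness, and cybersecurity testing

- Continuous monitoring post-deployment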

Missing any of these can trigger compliance failure.
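Human oversight and post-deployment monitoring both depend on keeping a record of what the system decided, and the Act requires high-risk systems to support automatic logging (Article 12). This is a minimal Python sketch of an append-only decision log, assuming a JSON Lines file and hypothetical field names (`system_id`, `model_version`, `human_reviewer`); the Act does not prescribe a log format.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def log_decision(log_path: Path, system_id: str, model_version: str,
                 input_payload: str, output: str,
                 reviewer: Optional[str] = None) -> dict:
    """Append one decision record to a JSON Lines file and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        # Hash the input so raw inputs are not copied into the log.
        "input_sha256": hashlib.sha256(input_payload.encode("utf-8")).hexdigest(),
        "output": output,
        # None means no human reviewer was recorded for this decision.
        "human_reviewer": reviewer,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record


def unreviewed(log_path: Path) -> list:
    """Return logged decisions with no recorded reviewer, for oversight follow-up."""
    with log_path.open(encoding="utf-8") as fh:
        records = [json.loads(line) for line in fh if line.strip()]
    return [r for r in records if r["human_reviewer"] is None]


if __name__ == "__main__":
    log_file = Path(tempfile.mkdtemp()) / "decisions.jsonl"
    log_decision(log_file, "cv-screener", "v1.2", "candidate 123", "reject")
    log_decision(log_file, "cv-screener", "v1.2", "candidate 456", "shortlist", reviewer="hr-1")
    print(len(unreviewed(log_file)), "decision(s) awaiting human review")
```

Appending rather than rewriting keeps earlier entries intact; a production system would add retention rules and tamper protection on top.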

But the real complexity appears in generative AI.

Generative AI & Foundation Models — New Rules Explained

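The EU AI Act introduces a separate layer for general-purpose AI (GPAI) models, often called foundation models.

Key obligations for all GPAI model providers:

- Publish a summary of the content used for training

- Maintain technical documentation (including the model's known or estimated energy consumption) and share information with downstream providers

- Put in place a policy to comply with EU copyright law

Separately, providers of generative AI systems must mark outputs as AI-generated in a machine-readable way.

For GPAI models with systemic risk, additional rules apply:

- Model evaluations, including adversarial testing

- Assessment and mitigation of systemic risks

- Serious-incident reporting to the AI Office

- Cybersecurity safeguards

This directly affects companies that build and supply such models, not just those that use them.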

Now comes the enforcement reality.

Penalties and Enforcement (Latest Updates)

The penalty structure is strict and already defined.

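- Up to €35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited practices

- Up to €15 million or 3% for most other violations, including GPAI provider obligations

- Up to €7.5 million or 1% for supplying incorrect or misleading information to authorities

- For SMEs and start-ups, the lower of the two amounts applies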
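The "whichever is higher" rule is easy to misread, so a worked calculation helps. This is a minimal Python sketch, assuming the Article 99 ceilings (€35 million or 7%, €15 million or 3%, €7.5 million or 1%) and the rule that SMEs face the lower of the two amounts. The violation keys are hypothetical labels, and the result is a legal maximum, not a predicted fine.

```python
# Fine ceilings from Article 99: (fixed amount in EUR, share of worldwide annual turnover).
CEILINGS = {
    "prohibited_practice": (35_000_000, 0.07),
    "other_obligation": (15_000_000, 0.03),
    "incorrect_information": (7_500_000, 0.01),
}


def max_fine(violation: str, worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Upper bound of the fine; authorities set actual amounts case by case below it."""
    fixed, share = CEILINGS[violation]
    turnover_based = share * worldwide_turnover_eur
    # Large undertakings: whichever is higher. SMEs and start-ups: whichever is lower.
    return min(fixed, turnover_based) if is_sme else max(fixed, turnover_based)
```

For a company with €2 billion in worldwide turnover, `max_fine("prohibited_practice", 2e9)` returns about €140 million, because 7% of turnover exceeds the €35 million fixed amount.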

Each EU member state designates its own national competent authorities, while the Commission's AI Office supervises GPAI models. The European AI Board coordinates across countries to ensure consistency.

This creates a situation where compliance is not optional and not easily delayed.

Still, confusion remains in the market.

Common Misinterpretations in Current EU AI Act News

Several misunderstandings are slowing compliance.

- Not all AI carries heavy obligations. Most systems are minimal-risk, and duties scale with the risk category

- It is not a replacement for GDPR. It complements it

- “High-risk” is context-based, not industry-based

Clearing these misconceptions saves time and cost.

To understand the difference better, compare it directly with GDPR.

EU AI Act vs GDPR — What’s New in Regulation Approach

The General Data Protection Regulation focuses on data privacy.

The EU AI Act focuses on AI system behavior and risk.

Key difference:

- GDPR regulates data usage

- AI Act regulates decision-making systems

They overlap when AI uses personal data, but they are not interchangeable.

For background, see this overview on <a href="https://en.wikipedia.org/wiki/Artificial_intelligence" target="_blank">Artificial Intelligence</a>.

Now the practical planning begins.

What Businesses Should Prioritize in the Next 90 Days

Focus on execution, not theory.

- Build an AI inventory

- Conduct gap analysis vs EU AI Act requirements

- Review third-party AI vendors

- Allocate budget for compliance tools
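A gap analysis against EU AI Act requirements boils down to comparing required controls with what each system already has. This is a minimal Python sketch, assuming a simplified control list that loosely follows Articles 9 to 15 and post-market monitoring (Article 72) for high-risk systems; the control labels and system names are hypothetical.

```python
# Simplified high-risk control list; labels are illustrative, not legal text.
HIGH_RISK_CONTROLS = {
    "risk_management",
    "data_governance",
    "technical_documentation",
    "record_keeping",
    "transparency_to_deployers",
    "human_oversight",
    "accuracy_robustness_cybersecurity",
    "post_market_monitoring",
}


def gap_report(implemented: dict) -> dict:
    """Map each system name to the sorted list of controls it still lacks."""
    return {
        system: sorted(HIGH_RISK_CONTROLS - controls)
        for system, controls in implemented.items()
    }


status = {
    "cv-screener": {"risk_management", "human_oversight", "record_keeping"},
    "credit-model": set(HIGH_RISK_CONTROLS),
}

for system, missing in gap_report(status).items():
    print(system, "->", missing or "no gaps")
```

Using set difference keeps the report in step with the control list: adding a control to `HIGH_RISK_CONTROLS` immediately surfaces it as a gap for every system that lacks it.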

Early movers reduce long-term cost.

Late movers face rushed audits and higher risk.

Looking ahead, more changes are expected.

Future Outlook — What to Watch in Upcoming EU AI Act News

Three developments are critical:

- Technical standards from EU bodies

- First enforcement cases (expected to shape interpretation)

- Alignment with global AI regulations

The EU AI Act is likely to become a global benchmark, similar to GDPR.

FAQs

When will the EU AI Act fully apply?

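Most obligations apply from 2 August 2026. The bans and GPAI rules already applied in 2025, and the last high-risk rules, for AI in regulated products, apply from 2 August 2027.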

Who must comply outside the EU?

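Any provider placing AI systems or GPAI models on the EU market, and any provider or deployer whose AI output is used in the EU, regardless of where it is based.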

What is a high-risk AI system?

AI used in sensitive areas listed in the Act, such as hiring, credit scoring, or medical devices.

What are the penalties?

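Up to €35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited practices. Lower caps apply to other violations.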


Final Takeaway

The EU AI Act has moved from policy to enforcement.

Deadlines are fixed. Obligations are defined. Penalties are enforceable.

Companies that act early focus on classification, documentation, and governance.

Those that delay will face compliance under pressure, not planning.

The next updates will not redefine the law. They will define how strictly it is enforced.