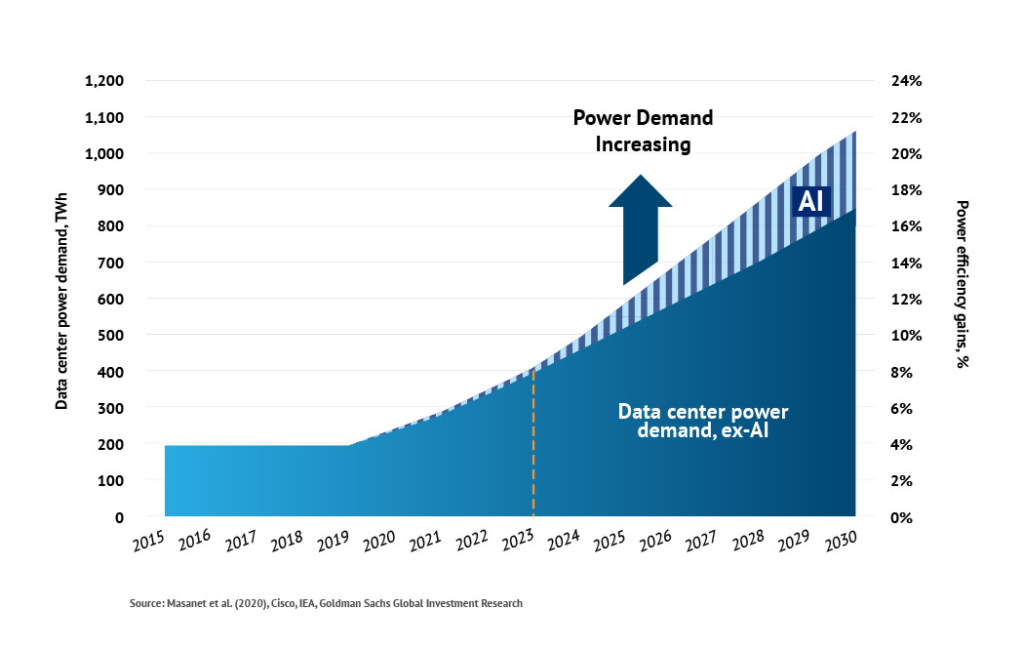

AI data centers are consuming energy at a pace that is outstripping grid expansion in several regions. Training a single large AI model can use 1–5 GWh of electricity, while high-demand inference systems now require megawatt-scale clusters per deployment. Major cloud providers are locking in long-term renewable and nuclear energy deals, yet grid bottlenecks are delaying new AI infrastructure by 12–36 months in some areas. At the same time, electricity costs have risen 20–40% for data center operators in key markets, directly impacting AI pricing.

That’s the current situation. But the real issue isn’t just rising consumption—it’s how quickly AI workloads are changing the structure of energy demand. Traditional data centers were predictable. AI clusters are not. They create sudden spikes, high-density loads, and cooling stress that existing systems weren’t designed for.

This is where the story shifts from “news” to practical impact. If you use AI tools, build on cloud platforms, or operate infrastructure, these energy constraints are already shaping pricing, availability, and performance. The next sections break down what’s actually driving this shift—and what to do about it.

Why AI Is Driving a Global Energy Surge

AI workloads are fundamentally different from traditional cloud computing. Training large models requires continuous high-power usage over days or weeks, while inference (running AI tools) creates constant, distributed demand across regions.

For context, a standard data center rack used to operate at 5–10 kW. AI racks now draw 50–100 kW, with some clusters pushing beyond that. This roughly 10x jump in power density changes power delivery, cooling, and cost models.
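To make the density jump concrete, here is a minimal sketch that counts how many racks the same IT load would occupy at each power density. The 1 MW load and the function name `racks_needed` are assumptions chosen for illustration; only the per-rack kW figures come from the text above.

```python
import math


def racks_needed(it_load_kw: float, rack_kw: float) -> int:
    """Racks required to host it_load_kw when each rack can draw rack_kw."""
    if it_load_kw < 0 or rack_kw <= 0:
        raise ValueError("load must be non-negative and rack_kw positive")
    return math.ceil(it_load_kw / rack_kw)


IT_LOAD_KW = 1_000  # hypothetical 1 MW of IT load

for label, rack_kw in [("legacy, 5 kW", 5), ("legacy, 10 kW", 10),
                       ("AI, 50 kW", 50), ("AI, 100 kW", 100)]:
    print(f"{label:>14}: {racks_needed(IT_LOAD_KW, rack_kw)} racks")
```

Under these assumptions the same 1 MW fits in 10 to 20 AI racks instead of 100 to 200 legacy racks, which is why the power feed and heat removal per rack have to be redesigned rather than scaled up.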

This shift explains why energy has become the limiting factor—not hardware. GPUs can be deployed quickly. Power infrastructure cannot. And that’s where the bottleneck begins.

Biggest Energy Challenges Facing AI Data Centers

Power Availability Is the Primary Constraint

Utilities in the US, Europe, and Asia report multi-year delays for new grid connections. AI companies are competing with industrial users for limited capacity. In some regions, projects are paused simply because power cannot be delivered on time.

Cooling Systems Are Reaching Their Limits

Air cooling is no longer sufficient for dense AI clusters. Liquid cooling adoption is accelerating, but retrofitting existing facilities is expensive and slow. Without liquid cooling, energy efficiency in dense clusters drops sharply.

Operational Costs Are Rising Fast

Electricity now represents 40–60% of total data center operating costs for AI-heavy workloads. This directly impacts:

- API pricing

- Enterprise AI subscriptions

- Cloud compute rates

The result is simple: AI is getting more expensive to run, even as models improve.
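A rough way to see how electricity prices feed into operating costs: if electricity is a share s of operating costs and its price rises by r, total operating costs rise by about s × r. This holds only if every other cost stays flat, which is a simplifying assumption made here. The sketch below plugs in the 40–60% share from this section and the 20–40% price rise cited in the introduction; the function name is hypothetical.

```python
def opex_increase(electricity_share: float, price_rise: float) -> float:
    """Fractional rise in total operating cost when only electricity gets pricier.

    Assumes every non-electricity cost stays flat (a simplification).
    """
    if not 0.0 <= electricity_share <= 1.0:
        raise ValueError("electricity_share must be between 0 and 1")
    return electricity_share * price_rise


low = opex_increase(0.40, 0.20)   # low end of both ranges
high = opex_increase(0.60, 0.40)  # high end of both ranges
print(f"Operating cost rise: {low:.0%} to {high:.0%}")
```

With these inputs the estimate lands at roughly 8% to 24%. It is a back-of-the-envelope figure, not a pricing model, since it ignores hedged energy contracts, hardware costs, and margins.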

Latest Innovations Solving AI Energy Demand

The industry isn’t standing still. Several solutions are already being deployed.

Liquid & Immersion Cooling

Liquid cooling reduces energy used for temperature control by up to 30%. It also allows higher compute density without overheating. Adoption is accelerating in new AI-focused facilities.

AI Chip Efficiency Gains

New AI chips are improving performance per watt by 2–4x compared to previous generations. This doesn’t reduce total energy use, because compute demand is growing faster than efficiency, but it slows the rate of increase.
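The relationship between efficiency and total energy can be sketched with one ratio: energy scales with compute demand divided by performance per watt. In the example below, the 2x and 4x efficiency gains come from the text, while the 10x growth in compute demand is a purely illustrative assumption, as is the function name.

```python
def energy_growth_factor(compute_growth: float, perf_per_watt_gain: float) -> float:
    """How much total energy use multiplies when demand and efficiency both grow."""
    if compute_growth <= 0 or perf_per_watt_gain <= 0:
        raise ValueError("both factors must be positive")
    return compute_growth / perf_per_watt_gain


ASSUMED_COMPUTE_GROWTH = 10.0  # hypothetical, not a figure from this article

for gain in (2.0, 4.0):
    factor = energy_growth_factor(ASSUMED_COMPUTE_GROWTH, gain)
    print(f"{gain:.0f}x perf/watt -> energy use grows {factor:.1f}x")
```

Under that assumed demand growth, energy use still rises 2.5x to 5x. Total energy falls only when the efficiency gain exceeds the growth in compute demand.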

Workload Optimization

AI jobs are now being scheduled based on energy availability, not just compute demand. For example, training tasks may run when renewable energy supply is high or electricity is cheaper.
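As a minimal sketch of energy-aware scheduling, the function below picks the start hour that minimizes total electricity price for a job that must run for a fixed number of contiguous hours. The price forecast, the function name `best_start_hour`, and the contiguous-job constraint are all assumptions for illustration. Production schedulers also weigh deadlines, carbon intensity, and cluster availability.

```python
from typing import Sequence


def best_start_hour(hourly_price: Sequence[float], job_hours: int) -> int:
    """Index of the cheapest contiguous window of job_hours in the forecast.

    Uses a sliding-window sum; ties go to the earliest window.
    """
    if job_hours <= 0 or job_hours > len(hourly_price):
        raise ValueError("job_hours must be between 1 and the forecast length")

    window_cost = sum(hourly_price[:job_hours])
    best_cost, best_start = window_cost, 0

    for start in range(1, len(hourly_price) - job_hours + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        window_cost += hourly_price[start + job_hours - 1] - hourly_price[start - 1]
        if window_cost < best_cost:
            best_cost, best_start = window_cost, start

    return best_start


# Hypothetical price forecast in $/MWh with a cheap midday stretch.
forecast = [50, 40, 20, 10, 15, 60]
print("Start the 3-hour job at hour", best_start_hour(forecast, 3))
```

The same structure works with a renewable-share or carbon-intensity forecast in place of price: negate or swap the series so that lower values mean a better window.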

This leads directly to the next issue: where that energy is coming from.

Renewable Energy Deals Powering AI Growth

Major tech companies are signing Power Purchase Agreements (PPAs) for solar and wind energy at unprecedented scale. These deals secure long-term pricing and help offset emissions.

However, renewables introduce a new constraint: intermittency. Solar and wind don’t produce constant power. AI systems, on the other hand, often require stable, uninterrupted energy.

This mismatch is why companies are now exploring a different option.

Nuclear Energy and AI Data Centers

Nuclear energy is re-entering the conversation because it provides consistent, high-output power. Small Modular Reactors (SMRs) are being considered for future AI infrastructure.

Unlike renewables, nuclear can support 24/7 base load demand, which aligns better with AI workloads. However, deployment timelines are long, and regulatory approval remains a challenge.

The takeaway: nuclear is promising, but it’s not an immediate fix.

Real Cost Impact: Who Pays for AI Energy Demand?

The cost ripple effect is already visible.

- Businesses face higher AI integration costs

- Developers see increased API pricing

- Consumers experience rising subscription fees

In some cases, AI service pricing has increased 10–25% year-over-year, largely due to infrastructure and energy costs.

This trend is unlikely to reverse in the short term.

Environmental Impact: Is AI Undermining Climate Goals?

AI’s carbon footprint is under scrutiny. Large models can emit hundreds of tons of CO₂ during training, depending on the energy source.
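The dependence on energy source can be sketched as emissions = energy × grid carbon intensity. The example below uses the 1–5 GWh training range cited in the introduction. The two carbon intensities (0.05 and 0.4 kg CO₂ per kWh) are illustrative assumptions standing in for a low-carbon and a fossil-heavy supply, not measured values for any provider.

```python
def training_emissions_tonnes(energy_gwh: float, intensity_kg_per_kwh: float) -> float:
    """Estimated CO2 in metric tonnes for a training run.

    energy_gwh: electricity used, in GWh (1 GWh = 1,000,000 kWh).
    intensity_kg_per_kwh: assumed carbon intensity of the electricity supply.
    """
    if energy_gwh < 0 or intensity_kg_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    energy_kwh = energy_gwh * 1_000_000
    return energy_kwh * intensity_kg_per_kwh / 1_000  # kg -> tonnes


# Hypothetical intensities: low-carbon supply vs. fossil-heavy supply.
for label, intensity in [("low-carbon (assumed 0.05)", 0.05),
                         ("fossil-heavy (assumed 0.4)", 0.4)]:
    for gwh in (1, 5):
        tonnes = training_emissions_tonnes(gwh, intensity)
        print(f"{gwh} GWh on {label}: about {tonnes:.0f} t CO2")
```

Under these assumptions the same training run differs by a factor of eight in emissions depending only on where its electricity comes from.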

While companies claim progress through renewable offsets, the reality is mixed. Some improvements are real. Others rely on accounting methods rather than actual emission reductions.

Regulators are starting to respond. New reporting requirements in parts of Europe and North America aim to increase transparency around AI energy use.

Regional Hotspots: Where AI Data Centers Are Expanding

Location is becoming a strategic decision.

- US & Northern Europe: Strong infrastructure but grid saturation

- Middle East: Abundant energy, growing investment

- Asia: Rapid expansion with mixed regulatory environments

Regions with cheap, stable electricity and cooler climates have a clear advantage. This is why geographic diversification is increasing.

What Businesses Should Do Now

If you rely on AI, energy is now part of your cost strategy.

- Choose vendors with transparent energy usage metrics

- Optimize workloads to reduce unnecessary compute

- Monitor cost per inference, not just total usage

- Plan for continued price increases over the next 2–3 years

Ignoring these factors leads to unpredictable costs and scalability issues.
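Tracking cost per inference can be as simple as dividing spend by request count for each billing period. The sketch below uses made-up monthly figures and hypothetical names (`MonthlyUsage`, `cost_per_inference`) to show a case where the total bill falls while the unit cost rises.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    month: str
    total_cost_usd: float
    inference_count: int


def cost_per_inference(usage: MonthlyUsage) -> float:
    """Average spend per inference request for one billing period."""
    if usage.inference_count <= 0:
        raise ValueError("inference_count must be positive")
    return usage.total_cost_usd / usage.inference_count


# Hypothetical billing data: the total bill drops, but each request costs more.
history = [
    MonthlyUsage("month 1", 1200.0, 400_000),
    MonthlyUsage("month 2", 1000.0, 250_000),
]

for usage in history:
    unit = cost_per_inference(usage)
    print(f"{usage.month}: total ${usage.total_cost_usd:,.0f}, "
          f"${unit:.4f} per inference")
```

In this made-up example the bill falls from $1,200 to $1,000 while the unit cost rises from $0.0030 to $0.0040, a change that a total-usage dashboard alone would hide.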

What to Watch Next (2026–2028)

Several developments will shape the next phase:

- More efficient AI models requiring less compute

- Grid upgrades in high-demand regions

- Increased use of hybrid energy strategies (renewable + nuclear)

- Policy changes affecting data center expansion

The key variable is not AI capability—it’s energy availability.

[Figure: AI data center energy infrastructure (example)]

FAQ: AI Data Center Energy

How much energy do AI data centers use?

Large AI facilities can draw 100–500 MW of power, comparable to a small city.

Why is AI energy demand increasing so fast?

Higher compute intensity, larger models, and constant inference workloads.

Are renewable sources enough?

Not alone. Intermittency limits reliability for continuous AI operations.

Will AI make electricity more expensive?

In some regions, yes—due to increased demand and infrastructure strain.

What is the most efficient AI infrastructure today?

Liquid-cooled data centers using high-efficiency AI chips and optimized workloads.

Final Takeaway

AI data center energy news is not just about growth—it’s about limits. Power availability, not compute, is becoming the defining constraint. Companies that adapt early—by optimizing usage and understanding energy costs—will avoid the biggest risks as AI infrastructure continues to scale.